"dist:win64": "npm run bundle & electron-builder -w -圆4 & node. dist/sabaki-vx.x.x-win-ia32-setup.exe ", "dist:win32": "npm run bundle & electron-builder -w -ia32 & node. "dist:arm64": "npm run bundle & electron-builder -l -arm64 ", dist/sabaki-vx.x.x-linux-ia32.AppImage & node. "dist:linux": "npm run bundle & electron-builder -l -ia32 -圆4 & node. "dist:macos": "npm run bundle & electron-builder -m -圆4 -arm64 ", "watch": "concurrently \"webpack -mode development -watch \" \"npm run watch-format \" ", "copyright": "Copyright © 2015-2022 Yichuan Shen ", Agents could be wrapped in an HTTP server,įor instance, and connect against a web interface so humans can play their bots."description": "An elegant Go/Baduk/Weiqi board and SGF editor for a more civilized age. Building a demo with a user interface would be nice.Would be beneficial to get started and see reasonable results from the start. Running a larger experiment and storing the weights somewhere freely accessible to users.The basics are covered, but there are potentially many This should be refactored into an iterator that only provides you with the next batch Experience collectors build one large ND4J array, which won’t work for large experiments.ScalphaGoZero can be improved in many ways, here are a few examples: The simulation stores experience data and lets your agents learn from it, so they The last piece needed to run your own AlphaGo Zero is to create a simulation between two ZeroAgent That can be used for training the agents. When opponents play many games against each other, they generate game play data, or experience, Includes territory estimation and reporting game results. Who won and reinforce the signals leading to victory (and weaken those leading to defeat). To play actual games, agents need the ability to estimate scores at the end of a game to decide The right shape can be used within this framework. 
Each model that takes encoded states and outputs To start with, you might want to work with simpler models. In AlphaGo Zero both of theseĬomponents are integrated into one deep neural network, with a so called policy and value head. To select a move, agents need machine learning models to predict the value of the current position (value function)Īnd how well a next move would probably work (policy function). For AlphaGo Zero you need a ZeroAgent,īut other agents with simpler methodology can also lead to decent results. AlphaGo Zero needs a specific ZeroEncoder,īut many other encoders are feasible and can be implemented by the user.Ī Go-playing agent knows how to play a game, by selecting the next move, and handles game state information Use for training and predictions, namely tensors. Game states and moves need to be translated into something a neural network can Is implemented in the Go board class to speed up computation. Basics: To let a computer play a game you need to code the basics of the game, including.For black, or white, or both, select the ScalphaGoZero engine that you just added and play the game. Many of the concepts used here can be reused for other games, only the basics are Quite a few concepts are needed to build an AlphaGoZero system, ScalphaGoZero is intendedĪs a software developer friendly approach with clean abstractions to help users get
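The batch iterator suggested in the list of improvements could look roughly like the sketch below. It is only an illustration: the `Experience` record and the `ExperienceBatches` helper are hypothetical names, not part of ScalphaGoZero, and plain Scala collections stand in for ND4J arrays.

```scala
// Hypothetical experience record: an encoded state plus its training targets.
// The field names are assumptions made for this sketch.
final case class Experience(state: Array[Float], visitCounts: Array[Float], reward: Float)

object ExperienceBatches {
  /** Lazily yields batches of at most `batchSize` records from `source`.
    * Only the current batch is materialized, never the whole data set.
    */
  def batches(source: Iterator[Experience], batchSize: Int): Iterator[Seq[Experience]] = {
    require(batchSize > 0, "batchSize must be positive")
    source.grouped(batchSize).map(_.toVector)
  }
}
```

A training loop would then consume one batch at a time, for example `ExperienceBatches.batches(records, 128).foreach(trainOnBatch)`, where `trainOnBatch` is a placeholder for whatever converts a batch into tensors and fits the model.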

Currently, there is only a model file for 5x5 games: `models/model_size_5_layers_2.model`. When running, information and errors are logged to `output.log`.
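As a rough illustration of choosing a model file by board size, the sketch below builds a path from the size and checks whether the file exists. The naming scheme is an assumption generalized from the single known file name, and `ModelFiles`, `modelPath`, `findModel` and the `layers` parameter are hypothetical; the engine's actual lookup may differ.

```scala
import java.nio.file.{Files, Path, Paths}

object ModelFiles {
  // Assumed naming scheme, inferred from models/model_size_5_layers_2.model.
  def modelPath(boardSize: Int, layers: Int, dir: String = "models"): Path =
    Paths.get(dir, s"model_size_${boardSize}_layers_${layers}.model")

  // Returns the path only if a regular file exists at that location.
  def findModel(boardSize: Int, layers: Int, dir: String = "models"): Option[Path] =
    Some(modelPath(boardSize, layers, dir)).filter(p => Files.isRegularFile(p))
}
```

Returning an `Option` keeps the missing-file case explicit, which matters here because only the 5x5 model is available.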

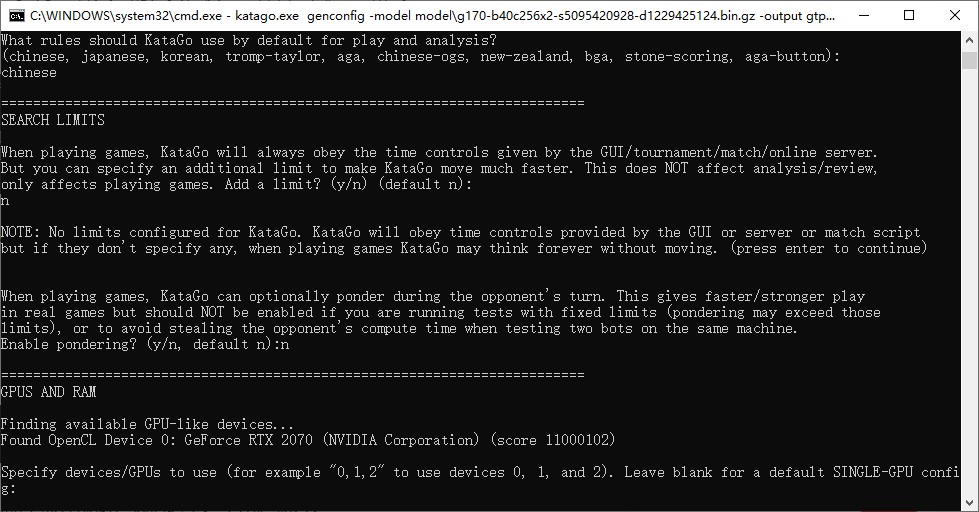

The engine can be started from the command line with `java -jar target/scala-2.12/ScalphaGoZero-assembly-1.0.1.jar gtp`. However, you should use `gtpClient.bat` (on Windows) when configuring Sabaki. Go to Engines | Manage Engines in the top menu, then add a ScalphaGoZero engine as shown in the screenshot above. This allows you to configure a new game and have one or both players use the engine that you just added. The engine will attempt to load the model file from the models directory based on the board size specified.
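Sabaki talks to engines over the Go Text Protocol (GTP): it sends one command per line, optionally prefixed with a numeric id, and expects a reply starting with `=` on success or `?` on failure, terminated by a blank line. The sketch below shows that request/response shape for a handful of commands. `GtpSketch` is a hypothetical name, the engine name it reports is an assumption, and it is not the ScalphaGoZero implementation.

```scala
object GtpSketch {
  private val known = List("protocol_version", "name", "list_commands", "boardsize", "quit")

  /** Handles one GTP command line and returns the formatted response. */
  def respond(line: String): String = {
    val tokens = line.trim.split("\\s+").toList
    // An optional numeric id may precede the command name.
    val (id, rest) = tokens match {
      case head :: tail if head.nonEmpty && head.forall(_.isDigit) => (head, tail)
      case other => ("", other)
    }
    def ok(msg: String): String = s"=$id $msg".trim + "\n\n"
    def fail(msg: String): String = s"?$id $msg\n\n"
    rest match {
      case "protocol_version" :: Nil => ok("2")
      case "name" :: Nil => ok("ScalphaGoZero") // assumed engine name
      case "list_commands" :: Nil => ok(known.mkString("\n"))
      // A real engine would resize its board here; this sketch only acknowledges.
      case "boardsize" :: n :: Nil if n.forall(_.isDigit) => ok("")
      case "quit" :: Nil => ok("")
      case _ => fail("unknown command")
    }
  }
}
```

For example, `GtpSketch.respond("7 name")` echoes the id back in the reply, which is how a GUI matches responses to the commands it sent.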
